Stop Renting Cloud VRAM. Own Your Execution Pipeline.

Enterprise-grade NVIDIA accelerators architected for continuous, zero-throttling local inference and fine-tuning.

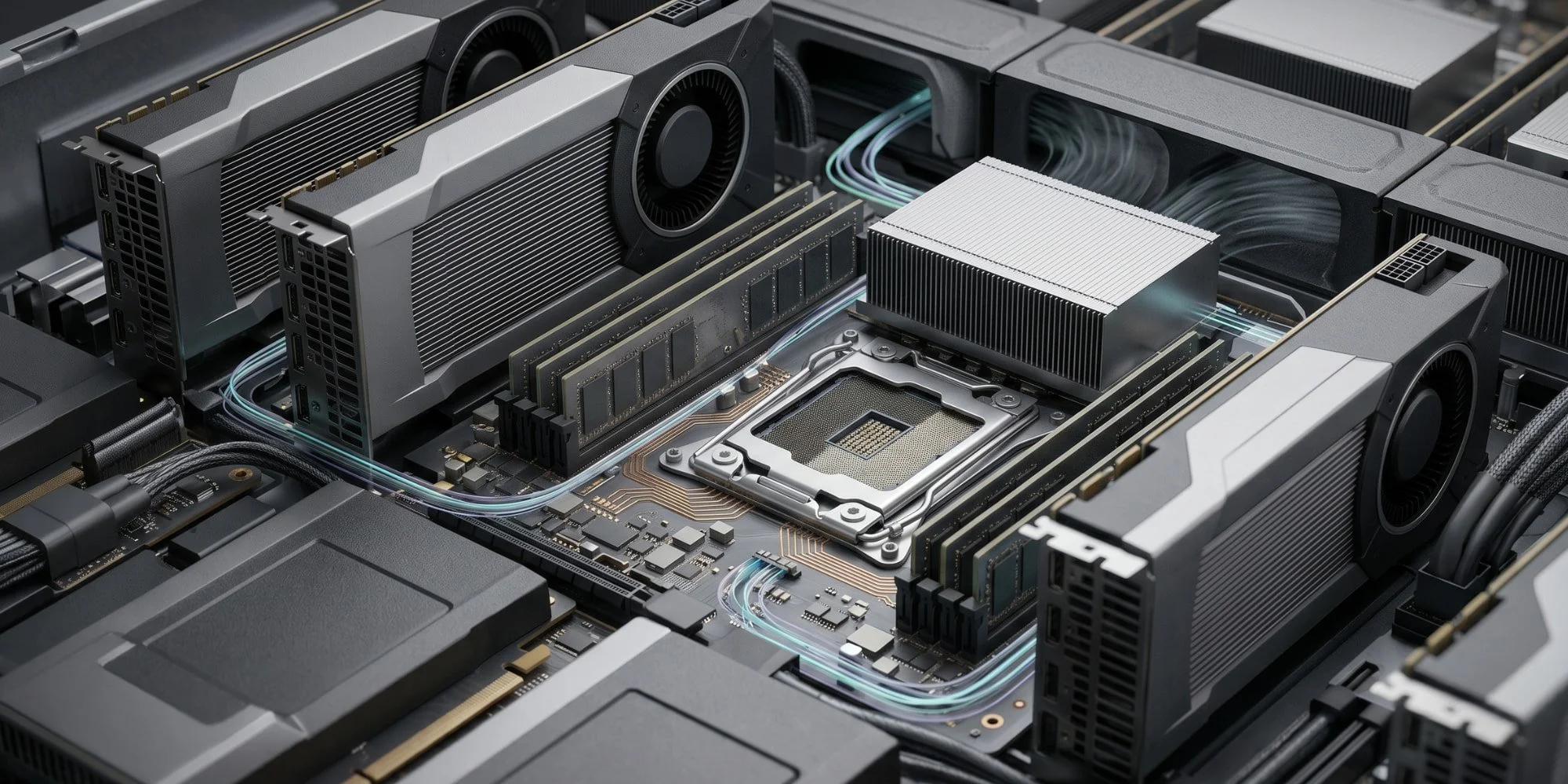

How We Architect Your Compute

In AI infrastructure, VRAM is your hard limit, but thermal physics dictates your speed. Stacking consumer gaming cards together results in suffocated hardware and throttled clock speeds. We do not build gaming PCs. We engineer high-density compute nodes using strict Active Blower architectures (RTX Ada and Blackwell generations) to ensure your models run at 100% capacity, 24/7, without thermal degradation.

The Reality of Thermal Physics

• Zero-Offload VRAM Scaling: Configurations range from 24GB entry points to 192GB pooled-VRAM arrays, so your entire model stays resident in GPU memory and avoids the latency of offloading weights to system RAM.

• Blower-Fan Physics: We utilize server-grade active exhaust cooling. This physically forces hot air out of the rear of the chassis, allowing multi-GPU density without cross-card heat soak.

• Precision Tiering: Whether you are prototyping lightweight coding assistants or running massive Sovereign LLMs, we scale the compute engine precisely to your batch size and parameter count.
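As a rough illustration of how parameter count maps to a VRAM tier, here is a minimal sizing sketch. It assumes weights dominate memory use and adds a flat 20% allowance for KV cache and activations; that allowance, the helper names, and the intermediate 48GB and 96GB tiers are hypothetical placeholders for illustration, not a published configuration list. Only the 24GB and 192GB endpoints come from the text above. Real requirements also depend on batch size and context length.

```python
# Bytes per parameter for common weight precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}

# Hypothetical tier sizes in GB. Only 24 and 192 appear in the text above;
# the intermediate values are assumptions for illustration.
TIERS_GB = [24, 48, 96, 192]


def estimate_vram_gb(params_billions, precision="fp16", overhead_fraction=0.2):
    """Rough VRAM (GB, 1 GB = 1e9 bytes) to keep all weights resident.

    overhead_fraction is an assumed flat allowance for KV cache and
    activations; it is not a measured value.
    """
    weights_gb = params_billions * BYTES_PER_PARAM[precision]
    return weights_gb * (1.0 + overhead_fraction)


def smallest_fitting_tier(required_gb, tiers=TIERS_GB):
    """Return the smallest tier that holds required_gb, or None if none does."""
    for tier in sorted(tiers):
        if required_gb <= tier:
            return tier
    return None


if __name__ == "__main__":
    for size_b, precision in [(7, "fp16"), (70, "int4"), (70, "fp16")]:
        need = estimate_vram_gb(size_b, precision)
        tier = smallest_fitting_tier(need)
        print(f"{size_b}B @ {precision}: ~{need:.1f} GB needed, tier: {tier} GB")
```

Under these assumptions, a model that exceeds the largest tier returns None, which is the point at which quantization or offloading would have to enter the conversation.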